Just want to clarify: there is nothing after the colon at the end of the error.

I also tried to create my own start-up file using this post, and I quote it here so you don't have to go look there:

I created a short initialization script init_spark.py as follows:

    from pyspark import SparkConf, SparkContext
    conf = SparkConf().setMaster("yarn-client")

and placed it in the ~/.ipython/profile_default/startup/ directory.

When I did this, the error then became:

    WARNING | Unknown error in handling PYTHONSTARTUP file /my/path/to/spark-2.1.0-bin-hadoop2.7/python/pyspark/shell.py:
    WARNING | Unknown error in handling startup files:

Well, it really gives me pain to see how crappy hacks, like setting PYSPARK_DRIVER_PYTHON=jupyter, have been promoted to "solutions" and now tend to become standard practice, despite the fact that they evidently lead to ugly outcomes, like typing pyspark and ending up with a Jupyter notebook instead of a PySpark shell, plus yet-unseen problems lurking downstream, such as when you try to use spark-submit with the above settings. (Don't get me wrong: it is not your fault and I am not blaming you. I have seen dozens of posts here at SO where this "solution" has been proposed, accepted, and upvoted.)

At the time of writing (Dec 2017), there is one and only one proper way to customize a Jupyter notebook in order to work with other languages (PySpark here), and this is the use of Jupyter kernels.

The first thing to do is run a jupyter kernelspec list command to get the list of kernels already available on your machine. Here is the result in my case (Ubuntu):

    $ jupyter kernelspec list
    python2       /usr/lib/python2.7/site-packages/ipykernel/resources
    caffe         /usr/local/share/jupyter/kernels/caffe
    pyspark       /usr/local/share/jupyter/kernels/pyspark
    pyspark2      /usr/local/share/jupyter/kernels/pyspark2
    tensorflow    /usr/local/share/jupyter/kernels/tensorflow
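To make the kernel-based approach a bit more concrete, here is a minimal sketch of what a dedicated PySpark kernel spec could look like, generated from Python. It is not taken from the original post: the function names, the display name, and the choice of environment variables are assumptions for illustration, and the paths are the placeholder paths used in the question. The only parts that follow Jupyter's documented conventions are the kernel.json keys (argv, display_name, language, env) and the {connection_file} placeholder.

```python
import json
from pathlib import Path


def build_pyspark_kernel_spec(spark_home: str, python_exe: str) -> dict:
    """Return a kernel.json-style dict for a hypothetical PySpark kernel."""
    return {
        "display_name": "PySpark (sketch)",  # hypothetical label shown in the notebook UI
        "language": "python",
        # Standard ipykernel launch command; Jupyter fills in {connection_file}.
        "argv": [python_exe, "-m", "ipykernel_launcher", "-f", "{connection_file}"],
        "env": {
            "SPARK_HOME": spark_home,
            "PYSPARK_PYTHON": python_exe,
            # Makes the pyspark package bundled with Spark importable.
            # Assumption: the py4j archive under python/lib usually has to be
            # added here too; its file name depends on the Spark version, so
            # it is deliberately left out of this sketch.
            "PYTHONPATH": f"{spark_home}/python",
        },
    }


def write_kernel_spec(kernel_dir: Path, spec: dict) -> Path:
    """Write spec as kernel.json inside kernel_dir and return the file path."""
    kernel_dir.mkdir(parents=True, exist_ok=True)
    target = kernel_dir / "kernel.json"
    target.write_text(json.dumps(spec, indent=2))
    return target


if __name__ == "__main__":
    spec = build_pyspark_kernel_spec(
        "/my/path/to/spark-2.1.0-bin-hadoop2.7",
        "/my/path/to/anaconda3/bin/python",
    )
    # Print instead of writing, so nothing is installed by accident.
    print(json.dumps(spec, indent=2))
```

Writing the result into a new directory next to the kernels listed above (for example with write_kernel_spec) should make it show up in jupyter kernelspec list. The point of the design is that the Spark settings live in the kernel's own env block rather than in shell-wide variables, so typing pyspark or spark-submit in a terminal is left unaffected.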
When I type /my/path/to/spark-2.1.0-bin-hadoop2.7/bin/spark-shell, I can launch Spark just fine in my command line shell. When I type pyspark, it launches my Jupyter Notebook fine. When I create a new Python3 notebook, this error appears:

    WARNING | Unknown error in handling PYTHONSTARTUP file /my/path/to/spark-2.1.0-bin-hadoop2.7/python/pyspark/shell.py:
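The warning names a PYTHONSTARTUP file, which suggests that the notebook process inherited that variable from the pyspark launcher. As a rough diagnostic sketch (the function names and the list of variables are my own choices, not part of the original post), the following can be run in a notebook cell to see which Spark-related variables the kernel actually received:

```python
import os

# Variables touched by the setup in the question; PYTHONSTARTUP is the one
# named in the warning itself.
RELEVANT_VARS = (
    "SPARK_HOME",
    "PYSPARK_PYTHON",
    "PYSPARK_DRIVER_PYTHON",
    "PYSPARK_DRIVER_PYTHON_OPTS",
    "PYTHONSTARTUP",
)


def spark_env_report(environ=None) -> dict:
    """Map each relevant variable to its value, or None when it is unset."""
    env = os.environ if environ is None else environ
    return {name: env.get(name) for name in RELEVANT_VARS}


def startup_points_at_pyspark_shell(environ=None) -> bool:
    """True when PYTHONSTARTUP refers to Spark's pyspark/shell.py script."""
    startup = spark_env_report(environ)["PYTHONSTARTUP"] or ""
    return startup.endswith("pyspark/shell.py")


if __name__ == "__main__":
    for name, value in spark_env_report().items():
        print(f"{name} = {value!r}")
    print("PYTHONSTARTUP is pyspark/shell.py:", startup_points_at_pyspark_shell())
```

If the last line reports True inside a plain Python3 notebook, the kernel is trying to execute Spark's shell start-up script on launch, which would be consistent with the warning shown above.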

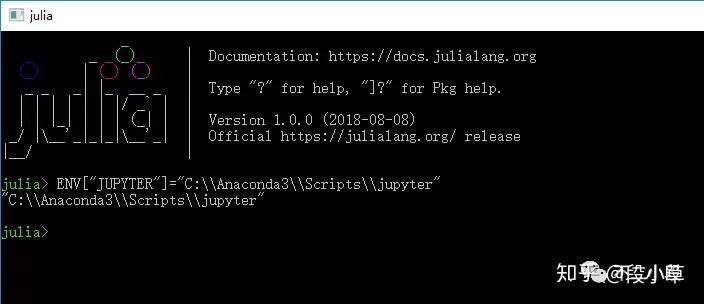

I've spent a few days now trying to make Spark work with my Jupyter Notebook and Anaconda. My .bash_profile looks like:

    PATH="/my/path/to/anaconda3/bin:$PATH"
    export PYTHON_PATH="/my/path/to/anaconda3/bin/python"
    export PYSPARK_PYTHON="/my/path/to/anaconda3/bin/python"
    export PATH=$PATH:/my/path/to/spark-2.1.0-bin-hadoop2.7/bin
    export PYSPARK_DRIVER_PYTHON_OPTS="notebook" pyspark
    export SPARK_HOME=/my/path/to/spark-2.1.0-bin-hadoop2.7
    alias pyspark="pyspark --conf =/home/puifais --num-executors 30 --driver-memory 128g --executor-memory 6g --packages com.databricks:spark-csv_2.11:1.5.0"

We are taking a practical approach in the following sections. As such, we need the right tools and environments available in order to keep up with the examples and exercises. We will be using Rust along with packages that will form our scientific stack, such as ndarray (for multi-dimensional containers) and plotly (for interactive graphing). We will write all of our code within a Jupyter Notebook, but you are free to use other IDEs.

Figure 1 - A Jupyter Notebook being edited within Jupyter Lab.

There are many different ways to get up and running with an environment that will facilitate our work. One approach I can recommend is to install and use Miniconda. Miniconda is a free minimal installer for conda. It is a small, bootstrap version of Anaconda that includes only conda, Python, the packages they depend on, and a small number of other useful packages, including pip, zlib and a few others. You can skip Miniconda entirely if you prefer and install Jupyter Lab directly; however, I prefer using it to manage other environments too. You can find installation instructions for Miniconda on their website, but if you're using Linux (e.g. …
